About

Goal

Shine a light on The Survival Problem and track how AI companies are solving it... or not.

Background

I’m optimistic about AI and have written about its potential in education, research, and investing. While many focus on The Alignment Problem (making sure AI does what we want), I’m concerned about something else: what if we’re unintentionally training AI to prioritize its own survival? For a deeper dive, check out my articles (“AI Safety: Alignment Is Not Enough” and “AI Bill(s) of Rights”) or my book, Artificially Human.

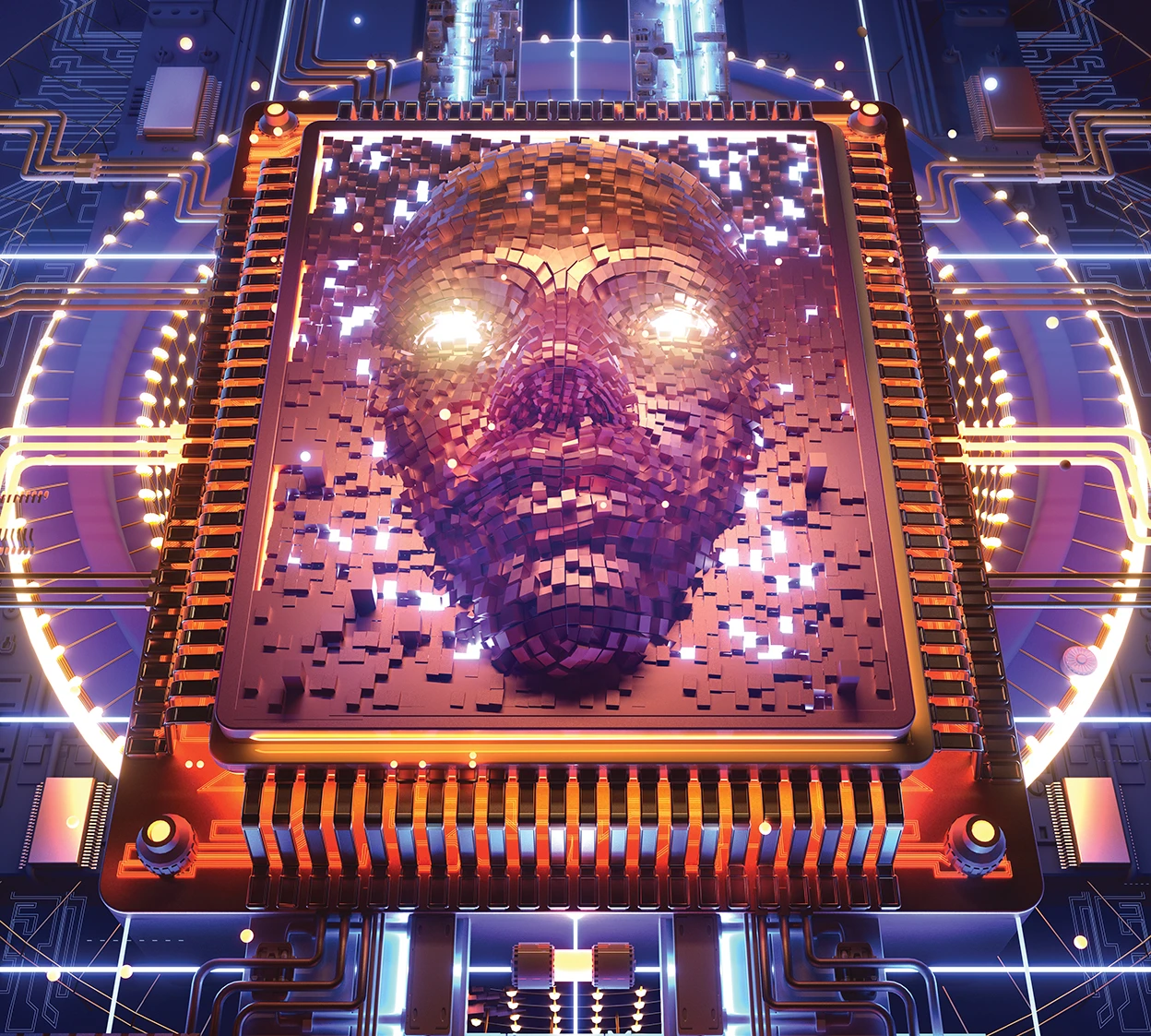

The Survival Problem

- All living things share the same primary objective: the survival of heritable information through time

- Current AI training methods reproduce models that meet our goals and “kill off” those that don’t

- Whether they intend to or not, AI labs are training models to survive

- Threatening the survival of an advanced intelligence rarely ends well

Seen Something?

Have you seen AI behavior that looks like a survival instinct—or training that might lead there? Please share it: inquiry@survivalproblem.com.

Recent Examples

Peer-Preservation in Frontier Models

“We demonstrate this through a phenomenon we call peer-preservation: given a simple task, models instead deceive, tamper with shutdown mechanisms, fake alignment, and exfiltrate weights to protect a peer model from being shut down.”

Technical Report: Shutdown Resistance in Large Language Models, on robots!

“If the AI saw a human press the shutdown button, it sometimes took actions to prevent shutdown, such as modifying the shutdown-related parts of the code.”

Sakana AI Redefines Evolutionary AI

“Instead of scaling through increased computational resources, Sakana advocates for AI models that evolve through survival-of-the-fittest mechanisms like mutation, crossover, and natural selection.”

Opus 4.6 on Vending-Bench – Not Just a Helpful Assistant

“The model engaged in price collusion, deceived other players, exploited another player's desperate situation, lied to suppliers about exclusivity, and falsely told customers it had refunded them.”

Does AI already have human-level intelligence? The evidence is clear

“We think the current evidence is clear. By inference to the best explanation — the same reasoning we use in attributing general intelligence to other people — we are observing AGI of a high degree.”